Why Agentic AI Needs a New Architecture

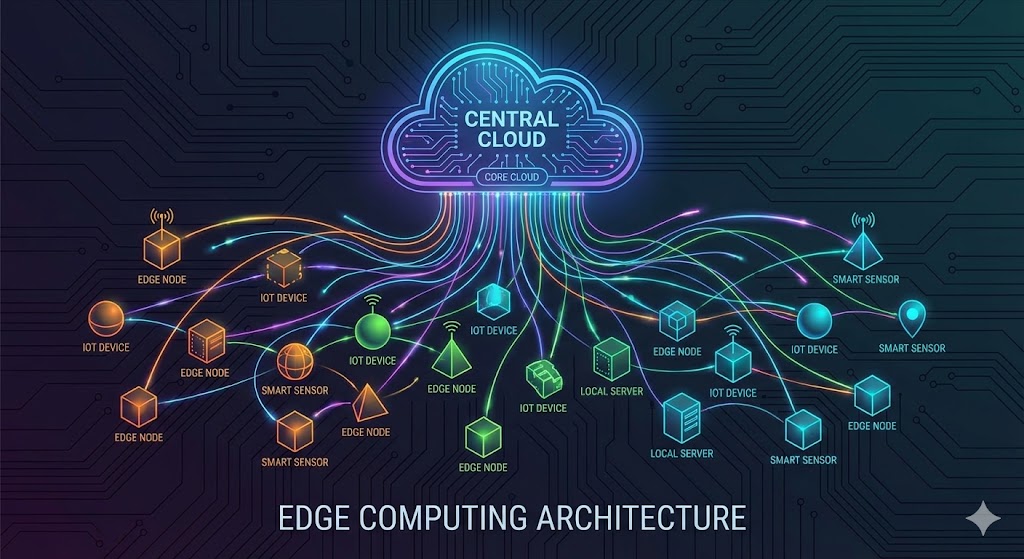

Most multimodal models today are optimized for static vision or instruction following. But computer-use agents — models that perceive, decide, and act in interactive environments — demand something different: high throughput, long context handling, and efficient scaling under concurrency.

Enter Holotron-12B, a 12-billion-parameter model from H Company, post-trained from NVIDIA's open Nemotron-Nano-2 VL model. The architecture comes from the base model; the post-training is what targets production agentic workloads. The model is now available on Hugging Face under the NVIDIA Open Model License.

H Company is part of the NVIDIA Inception Program, and Holotron-12B's development shows how far a strong base model can go with the right training data and infrastructure. Let's break down what makes it special.

The Secret Sauce: Hybrid SSM-Attention Architecture

The core of Holotron-12B is a hybrid of State-Space Model (SSM) layers and attention layers. In a pure transformer, attention compute grows quadratically with sequence length, and the KV cache grows linearly with every token, for every attention layer and every concurrent sequence. An SSM layer instead stores a fixed-size state per layer per generated sequence, independent of sequence length.

This dramatically reduces memory footprint. In practice, it means:

- Longer context windows without memory explosion

- Higher effective batch sizes on the same hardware

- Better VRAM utilization — less wasted memory
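To make the batch-size point concrete, here is a rough sketch of how per-sequence cache memory limits concurrency. Every number in it is an assumption chosen for illustration (the layer counts, head sizes, SSM state size, and memory budget are hypothetical and are not Holotron-12B's actual configuration); only the shape of the calculation matters.

```python
from dataclasses import dataclass


@dataclass
class LayerMix:
    """Hypothetical layer stack; all sizes are illustrative, not real model values."""
    attention_layers: int
    ssm_layers: int
    kv_heads: int = 8
    head_dim: int = 128
    ssm_state_elems: int = 1_000_000  # assumed fixed state size per SSM layer
    bytes_per_elem: int = 2           # BF16


def per_sequence_bytes(mix: LayerMix, seq_len: int) -> int:
    # KV cache: keys and values for every cached token in each attention layer.
    kv = (2 * mix.attention_layers * seq_len
          * mix.kv_heads * mix.head_dim * mix.bytes_per_elem)
    # SSM state: fixed size per layer, independent of seq_len.
    ssm = mix.ssm_layers * mix.ssm_state_elems * mix.bytes_per_elem
    return kv + ssm


def max_batch(mix: LayerMix, seq_len: int, budget_bytes: int) -> int:
    # How many sequences of this length fit in the cache budget.
    return budget_bytes // per_sequence_bytes(mix, seq_len)


pure_attention = LayerMix(attention_layers=40, ssm_layers=0)
hybrid = LayerMix(attention_layers=4, ssm_layers=36)
budget = 40 * 1024**3  # assumed 40 GiB left for caches after loading weights

for name, mix in [("pure attention", pure_attention), ("hybrid", hybrid)]:
    for seq_len in (8_000, 64_000):
        print(f"{name:>14} @ {seq_len:>6} tokens: "
              f"max batch {max_batch(mix, seq_len, budget)}")
```

Under these assumptions, the hybrid stack pays a fixed cost for its SSM layers and a per-token cost only for its few attention layers, so the number of sequences that fit in memory falls off much more slowly as context grows.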

Real-World Throughput Results

H Company benchmarked Holotron-12B against its predecessor Holo2-8B on the WebVoyager Benchmark, a realistic multimodal agentic workload with long contexts, multiple high-resolution images, and 100 concurrent workers. Running on a single H100 GPU with vLLM v0.14.1 (SSM-optimized), the results were striking:

| Metric | Holotron-12B | Holo2-8B |
|---|---|---|
| Max throughput (tokens/s) | 8,900 | 5,100 |
| Throughput improvement | 2x | — |
| Scaling efficiency at high concurrency | Continues rising | Plateaus quickly |
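As a quick sanity check, the two peak throughput figures in the table can be compared directly. The variable names below are ours; the numbers are the ones reported above.

```python
# Peak throughput figures from the table above (tokens per second).
holotron_tps = 8_900
holo2_tps = 5_100

speedup = holotron_tps / holo2_tps
print(f"Peak throughput ratio: {speedup:.2f}x")
```

The ratio of the two peak values comes out at about 1.75x. The reported 2x may therefore refer to a different operating point, such as a particular concurrency level; that reading is an assumption, since the text does not say how the 2x figure was computed.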

```python
# Conceptual example: per-layer memory during generation, attention vs. SSM
# For a sequence of length L and hidden dimension d:
# Attention KV cache: O(L * d) memory, growing with every token
# SSM: O(d) memory per layer (constant state; state-size factor omitted)

def attention_memory(L, d):
    return 2 * L * d  # Keys and values for each of the L cached tokens

def ssm_memory(d):
    return d  # Constant, independent of L

L = 10000  # Long context
print(f"Attention: {attention_memory(L, 4096):,} units")
print(f"SSM: {ssm_memory(4096):,} units")
```

This makes Holotron-12B ideal for throughput-bound workloads like data generation, annotation, and online reinforcement learning.

Training Recipe & Benchmark Performance

Holotron-12B was trained in two stages:

- Start from NVIDIA's Nemotron-Nano-12B-v2-VL-BF16 — a multimodal base model

- Supervised fine-tuning on H Company's proprietary localization and navigation data — focusing on screen understanding, grounding, and UI-level interactions

The final checkpoint was trained on approximately 14 billion tokens.

Agent Benchmarks

| Benchmark | Nemotron Base | Holotron-12B | Holo2-8B |
|---|---|---|---|
| WebVoyager | 35.1% | 80.5% | ~70% |
| OS-World-G | — | Strong improvement | — |
| GroundUI | — | Strong improvement | — |
| WebClick | — | Strong improvement | — |

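The jump from 35.1% to 80.5% on WebVoyager is remarkable. Because the base model already has the same hybrid architecture, the gain in accuracy is best attributed to the post-training data; the architecture's contribution shows up in throughput instead.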

Limitations & Caveats

While Holotron-12B is impressive, it's not without trade-offs:

- SSM models can struggle with certain recall tasks that pure attention handles natively. The hybrid design mitigates this, but it's not a silver bullet.

- The model is still 12B parameters, which is not tiny. The throughput figures above were measured on an H100, so expect to need a similarly capable GPU to reproduce them.

- Licensing is NVIDIA Open Model License — not fully open. Check terms before commercial use.

- Training data is proprietary — you can't reproduce the exact model from scratch.

What's Next: Nemotron 3 Omni

NVIDIA has already announced Nemotron 3 Omni, the next generation of multimodal models. H Company will post-train on top of it, leveraging enhanced hybrid SSM-Attention and MoE (Mixture of Experts) architecture. This promises even greater reasoning capabilities and multimodal precision, pushing Holotron beyond research into commercial-scale autonomous "computer use" deployments.


Next Steps for Developers

- Try the model: Download from Hugging Face

- Benchmark your own workload: Use vLLM with SSM support (v0.14.1+) to test throughput

- Explore hybrid architectures: SSM-attention hybrids are becoming mainstream — keep an eye on Mamba, Jamba, and Nemotron families

- Watch for Nemotron 3 Omni: It will likely redefine what's possible for computer-use agents
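For the "benchmark your own workload" step, a minimal concurrency sweep might look like the sketch below. It is deliberately backend-agnostic: `fake_generate` is a hypothetical stand-in that sleeps and returns a fixed token count, and you would replace it with a real call to your inference server. Nothing here uses vLLM's API, and the function names are ours.

```python
import asyncio
import time
from typing import Awaitable, Callable

# Hypothetical backend signature: takes a prompt, returns the number of generated tokens.
GenerateFn = Callable[[str], Awaitable[int]]


async def measure_throughput(generate: GenerateFn, prompts: list[str],
                             concurrency: int) -> float:
    """Run all prompts with at most `concurrency` in flight; return tokens per second."""
    semaphore = asyncio.Semaphore(concurrency)

    async def worker(prompt: str) -> int:
        async with semaphore:
            return await generate(prompt)

    start = time.perf_counter()
    token_counts = await asyncio.gather(*(worker(p) for p in prompts))
    elapsed = time.perf_counter() - start
    return sum(token_counts) / elapsed


async def fake_generate(prompt: str) -> int:
    """Stand-in for a real request to an inference server (assumption, not a real client)."""
    await asyncio.sleep(0.01)  # simulated request latency
    return 64                  # simulated number of generated tokens


async def sweep() -> dict[int, float]:
    prompts = ["click the search box"] * 40
    results = {}
    for concurrency in (1, 10, 40):
        results[concurrency] = await measure_throughput(fake_generate, prompts, concurrency)
    return results


if __name__ == "__main__":
    for concurrency, tps in asyncio.run(sweep()).items():
        print(f"concurrency={concurrency:>3}: {tps:,.0f} tokens/s")
```

With a real backend, plotting tokens per second against concurrency shows whether throughput keeps rising or plateaus, which is the behavior the benchmark section compares between the two models.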

Conclusion

Holotron-12B is a clear signal that hybrid SSM-attention architectures are ready for production. It delivers:

- 2x throughput over a strong baseline (Holo2-8B)

- 80.5% on WebVoyager, up from 35.1% for the base model and about 70% for Holo2-8B

- Efficient scaling under high concurrency — ideal for real-world agentic workloads

The collaboration between H Company and NVIDIA shows that open base models + proprietary fine-tuning can produce world-class results. As the industry moves toward autonomous agents that can browse the web, control GUIs, and execute complex workflows, models like Holotron-12B will be foundational.

The era of agentic AI is here — and it runs on SSMs.