The Inference Trilemma: Scale, Latency, and Cost

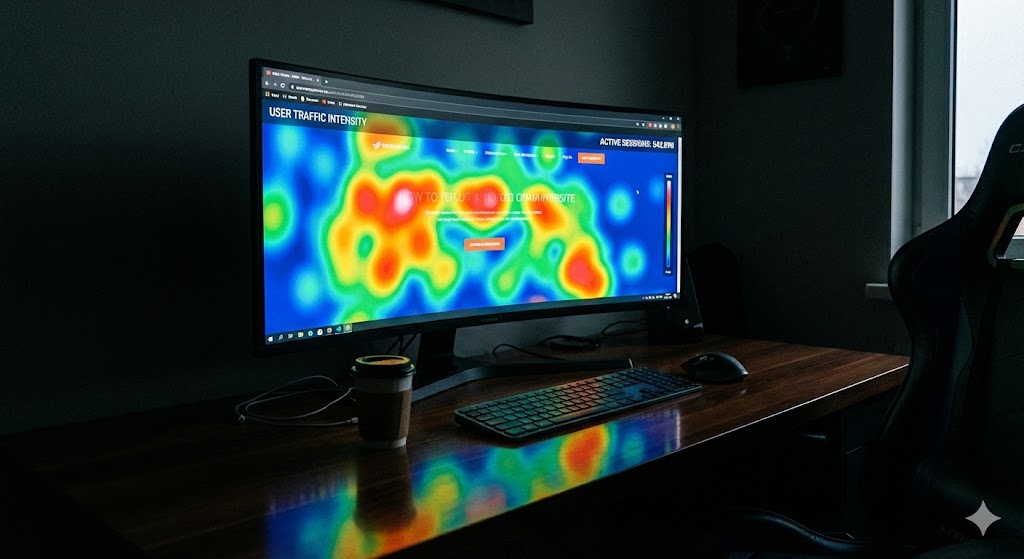

Building AI recommendation systems at the scale of billions of users presents a fundamental challenge: the 'inference trilemma.' How do you increase model complexity to LLM-scale for deeper user understanding, while simultaneously maintaining the sub-second latency critical for user experience and keeping computational costs sustainable? Brute-force scaling hits a wall, as simply adding hardware is economically and technically infeasible.

Meta's answer is the Adaptive Ranking Model, a paradigm shift in real-time AI serving. Instead of a one-size-fits-all model, it intelligently routes each ad request to the most effective and efficient model variant based on real-time user context. This breakthrough, detailed in the official engineering blog, hinges on three core innovations that redefine what's possible at production scale.
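The routing idea can be pictured with a small sketch. The article does not say which signals or thresholds drive routing, so everything here is hypothetical and chosen only for illustration: the variant names, the `RequestContext` fields, and the cut-off values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelVariant:
    name: str
    relative_cost: float  # hypothetical cost relative to the lightest variant


@dataclass(frozen=True)
class RequestContext:
    history_length: int       # assumed signal: number of recent user events
    latency_budget_ms: float  # assumed signal: time left to answer the request


# Hypothetical model variants; not taken from the source.
VARIANTS = {
    "light": ModelVariant("light", 1.0),
    "standard": ModelVariant("standard", 4.0),
    "large": ModelVariant("large", 16.0),
}


def route(ctx: RequestContext) -> ModelVariant:
    """Pick a variant from request context using illustrative thresholds."""
    # Little time or little signal: the cheapest variant is the safe choice.
    if ctx.latency_budget_ms < 20 or ctx.history_length < 10:
        return VARIANTS["light"]
    # A moderate amount of history: a mid-sized variant.
    if ctx.history_length < 200:
        return VARIANTS["standard"]
    # Rich history and enough time: the most expensive variant.
    return VARIANTS["large"]


chosen = route(RequestContext(history_length=500, latency_budget_ms=100.0))
```

A production router would presumably learn or tune such rules rather than hard-code them; the point is only that the decision is made per request, before the expensive model runs.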

The Three Pillars of LLM-Scale Efficiency

1. Inference-Efficient Model Scaling: From Linear to Sub-Linear

Traditional models waste computation by processing each user-ad pair independently. The Adaptive Ranking Model introduces Request-Oriented Optimization. It computes dense user signals (like long behavior sequences) once per request and shares the results across all ad candidates. This is achieved through:

- In-Kernel Broadcast: Sharing request-level embeddings directly within GPU kernels, slashing memory bandwidth pressure.

- Centralized Feature Store: Replacing redundant data copies with a high-efficiency key-value store, joined with training data on-the-fly.

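This changes how cost scales with the number of ad candidates n in a request. Processing each user-ad pair independently repeats the expensive user-side computation n times, so that work grows linearly, O(n). Computing it once per request leaves only the lighter per-candidate work growing with n. This is the shift from linear to sub-linear scaling, a prerequisite for handling LLM-scale complexity within a strict ~100ms latency budget.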
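The difference between per-pair and per-request computation can be shown with a toy example. This is a minimal sketch, not Meta's implementation: `encode_user` is a hypothetical stand-in for an expensive user-sequence encoder (here just a mean over event vectors), and the dot-product scorer is assumed for illustration.

```python
def encode_user(history, counter):
    """Hypothetical stand-in for an expensive user encoder: mean of event vectors."""
    counter["user_encodes"] += 1  # track how often the costly step runs
    dim = len(history[0])
    return [sum(event[d] for event in history) / len(history) for d in range(dim)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def score_per_pair(history, ads, counter):
    """Naive path: the user encoder runs again for every ad candidate."""
    return [dot(encode_user(history, counter), ad) for ad in ads]


def score_per_request(history, ads, counter):
    """Request-oriented path: encode the user once, reuse it for all candidates."""
    user_vec = encode_user(history, counter)
    return [dot(user_vec, ad) for ad in ads]


history = [[1.0, 0.0, 2.0, 1.0], [3.0, 2.0, 0.0, 1.0]]
ads = [[0.5, 0.5, 0.0, 1.0], [1.0, 0.0, 1.0, 0.0], [0.0, 2.0, 0.0, 0.0]]

naive_calls = {"user_encodes": 0}
shared_calls = {"user_encodes": 0}
naive_scores = score_per_pair(history, ads, naive_calls)
shared_scores = score_per_request(history, ads, shared_calls)

print(naive_scores == shared_scores)   # same scores either way
print(naive_calls["user_encodes"])     # one encode per candidate
print(shared_calls["user_encodes"])    # one encode per request
```

Both paths produce identical scores; only the number of encoder invocations differs, and that gap widens as the candidate list grows.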

2. Deep Model-System Co-Design: Maximizing Hardware ROI

You can't just drop a massive model onto existing hardware. This model was co-designed with the silicon it runs on.

- Selective FP8 Quantization: Rather than applying low precision to every layer, a micro-benchmark identifies the layers that tolerate precision loss, and FP8 is applied only there, preserving quality while boosting throughput.

- Hardware-Aware Kernel Fusion: Thousands of small operations are fused into compute-dense kernels (e.g., using Grouped GEMM). This minimizes costly memory accesses and aligns the computation graph with modern GPU architectures, boosting Model FLOPs Utilization (MFU), the fraction of the hardware's peak FLOPs that the model actually achieves, to 35% across heterogeneous hardware.
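The selective quantization bullet above can be illustrated with a toy micro-benchmark. This is a sketch under stated assumptions, not the method from the engineering blog: `fake_fp8` only mimics rounding to three explicit mantissa bits (roughly in the spirit of an E4M3-style format) and ignores exponent range, saturation, and subnormals. The layer names, weights, probe input, and 5% tolerance are all made up.

```python
import math


def fake_fp8(x, mantissa_bits=3):
    """Round x to `mantissa_bits` explicit mantissa bits (simulation only)."""
    if x == 0.0:
        return 0.0
    m, e = math.frexp(x)             # x == m * 2**e with 0.5 <= |m| < 1
    steps = 2 ** (mantissa_bits + 1)  # implicit leading bit + explicit bits
    return math.ldexp(round(m * steps) / steps, e)


def matvec(weights, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in weights]


def relative_error(weights, probe):
    """Relative L2 error of a layer's output when its weights are quantized."""
    ref = matvec(weights, probe)
    quant = matvec([[fake_fp8(w) for w in row] for row in weights], probe)
    num = math.sqrt(sum((a - b) ** 2 for a, b in zip(ref, quant)))
    den = math.sqrt(sum(a * a for a in ref))
    return num / den


def select_fp8_layers(layers, probe, tolerance):
    """Keep only the layers whose quantization error stays within tolerance."""
    return [name for name, w in layers.items()
            if relative_error(w, probe) <= tolerance]


# Hypothetical layers: one with exactly representable weights, one where
# small rounding errors are amplified by cancellation.
layers = {
    "robust_mlp": [[0.5, 1.0], [1.5, -2.0]],
    "sensitive_head": [[1.03, -1.0], [0.26, 0.26]],
}
probe = [1.0, 1.0]
fp8_layers = select_fp8_layers(layers, probe, tolerance=0.05)
print(fp8_layers)
```

Only `robust_mlp` passes the tolerance here, so it alone would be run in low precision while `sensitive_head` stays in higher precision. A real benchmark would measure error on representative data and against an end-to-end quality metric.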

3. Reimagined Serving Infrastructure: Breaking Memory Walls

When model parameters approach a trillion, they exceed the memory of any single GPU.

- Multi-Card Embedding Scaling: Embedding tables are sharded across a GPU cluster with hardware-optimized communication, achieving performance parity with single-card setups.

- Trillion-Parameter Scale via Smart Allocation: Embedding hash sizes are dynamically allocated based on feature sparsity, and unused embeddings are pruned. Unified embeddings allow multiple features to share a table, maximizing learning capacity within a fixed memory budget.
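The two ideas of sharding a table across devices and letting several features share one table can be sketched together. This is an illustrative toy, not the production design: each "shard" is a Python list standing in for one GPU's memory, and the class name, the CRC32 hash, and the round-robin placement are assumptions. Dynamic hash sizing and pruning are not modeled.

```python
import zlib


class ShardedUnifiedEmbedding:
    """Toy embedding table shared by several features and split across shards."""

    def __init__(self, num_shards, rows_per_shard, dim):
        self.num_shards = num_shards
        self.rows_per_shard = rows_per_shard
        # shards[s][r] is one embedding vector; a shard stands in for one device.
        self.shards = [
            [[0.0] * dim for _ in range(rows_per_shard)]
            for _ in range(num_shards)
        ]

    def locate(self, feature, raw_id):
        """Map (feature, id) to a (shard, row) slot in the shared table."""
        key = f"{feature}:{raw_id}".encode()
        slot = zlib.crc32(key) % (self.num_shards * self.rows_per_shard)
        # Round-robin placement spreads neighbouring slots over all shards.
        return slot % self.num_shards, slot // self.num_shards

    def lookup(self, feature, raw_id):
        shard, row = self.locate(feature, raw_id)
        return self.shards[shard][row]

    def set(self, feature, raw_id, vector):
        shard, row = self.locate(feature, raw_id)
        self.shards[shard][row] = list(vector)


table = ShardedUnifiedEmbedding(num_shards=4, rows_per_shard=8, dim=2)
table.set("user_id", 123, [1.0, 2.0])
vec = table.lookup("user_id", 123)     # read back from whichever shard owns it
ad_slot = table.locate("ad_id", 999)   # a second feature sharing the same table
```

Because features share rows, two different keys can collide on one slot; that is the capacity-versus-memory trade-off a fixed budget forces. In a real multi-GPU setup, the `lookup` step is where cross-device communication would occur.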

Trade-offs, Limitations, and the Road Ahead

| Advantage | Consideration / Challenge |
|---|---|
| Sub-second LLM-scale inference | Extreme system complexity; requires deep, vertical integration from silicon to software stack. |
| High hardware utilization (35% MFU) | Optimization is highly hardware-specific; porting to new architectures (e.g., different GPU vendors, AI accelerators) requires significant re-engineering. |
| Dynamic request routing | Introduces routing logic overhead and potential for routing errors, requiring robust online validation systems. |
| Cost-efficient scaling | The upfront R&D and co-design investment is enormous, making this approach primarily viable for hyperscalers. |

The Path Forward: Meta's roadmap points towards greater autonomy: agentic frameworks for automatic kernel optimization, near-instant model updates for real-time adaptation, and advanced compression to run sophisticated models on diverse global hardware. The goal is an infrastructure that autonomously adapts to fluctuating traffic and user signal patterns.

Key Takeaways and Your Next Steps

The Adaptive Ranking Model is less about a single algorithm and more about a holistic systems engineering philosophy. It suggests that the next frontier of AI performance lies not only in novel architectures, but in removing the boundaries between model design, software runtime, and hardware.

For Practitioners & Architects:

- Think Systems-First: Before chasing model complexity, audit your inference stack for redundancy (like per-candidate repeated computation) and memory bottlenecks.

- Embrace Heterogeneity: Design for mixed-precision execution and hardware diversity from the start. A one-size-fits-all precision or kernel strategy is inefficient.

- Plan for Scale Out, Not Just Up: When models outgrow a single device, a sharding strategy is non-negotiable. Design your data flows and communication layers accordingly.

This approach mirrors the architectural mindset needed for building resilient, large-scale systems, similar to the principles discussed in this guide on designing for high availability and sovereignty in cloud architectures. Both require deep co-design of application logic and infrastructure constraints.

To dive deeper into the technical foundations and see the full scope of innovations, explore the original engineering blog post.

What to Learn Next: To operationalize complex ML models at scale, familiarize yourself with MLOps frameworks that manage the full lifecycle. Exploring tools that accelerate iterative development, like those discussed in trends around Metaflow's Spin feature, can provide practical stepping stones toward building more efficient and agile ML systems.